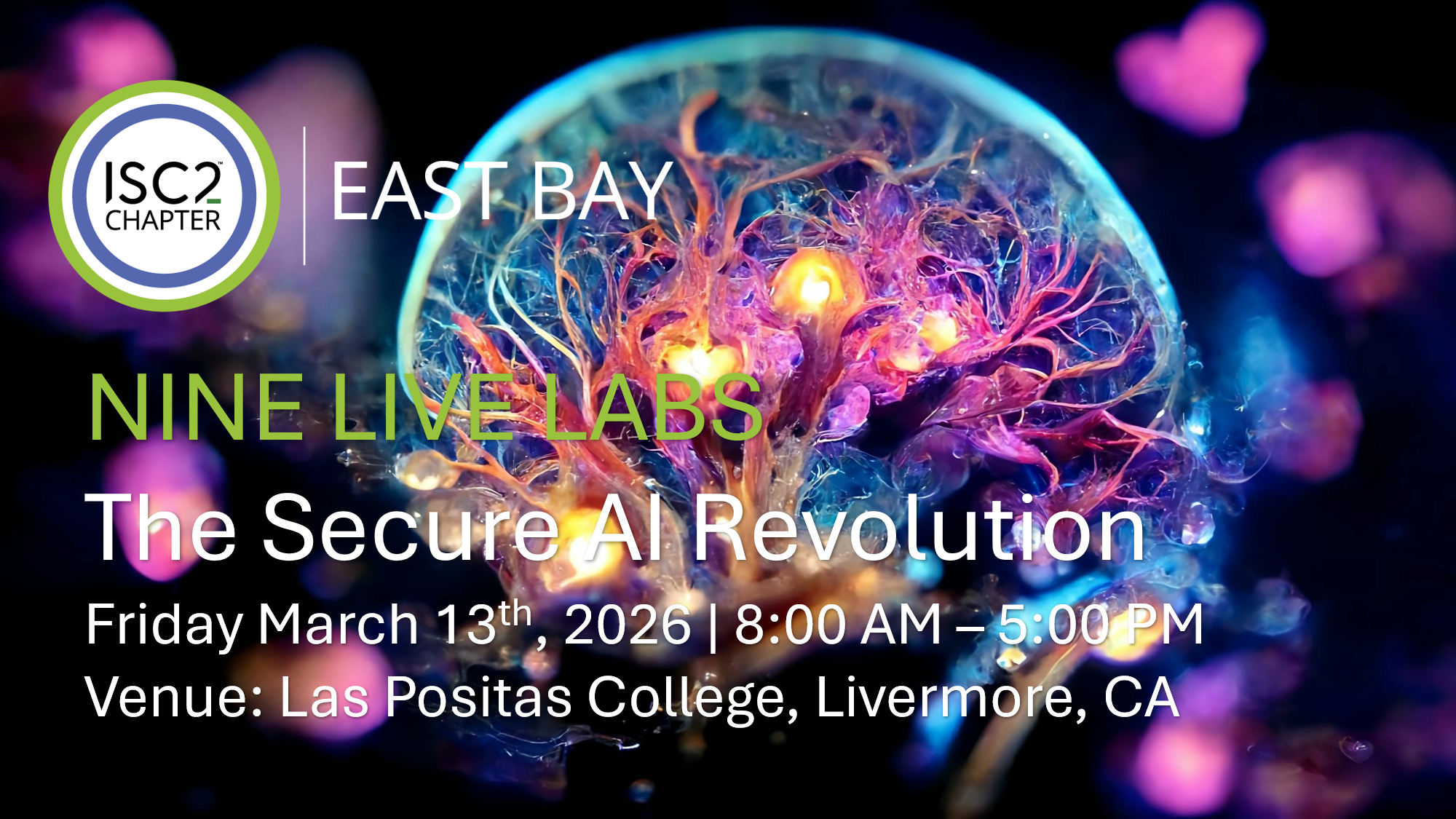

Venue: Las Positas College, 3000 Campus Loop, Bld 1000, Livermore, CA

Date and Time: March 13, 2026 | 8:00 AM – 5:00 PM

The Secure AI Revolution: A Practical Blueprint for Developers and Cybersecurity Auditors to Master the New AI Development Lifecycle

With its primary focus on Application Security and Development in the Age of AI, this event examines the urgent shift required in workforce education. We re-emphasize critical SDLC guardrails often overlooked amidst the excitement of novel AI capabilities—such as the risks of VIBE Coding—while addressing the emerging security concerns of autonomous systems through practical, hands-on learning.

Conference Keynotes and Labs explore the transformative impact of resource-conscious AI development, including RAG and low-cost fine-tuning, on the modern enterprise attack surface. Our curriculum provides rigorous training to secure every stage of the AI development lifecycle: from preventing Data Poisoning and Prompt Injection to defending against the new frontier of Agentic AI vulnerabilities. Attendees will master the transition from the OWASP LLM Top 10 to the OWASP Top 10 for Agentic AI, learning to mitigate Excessive Agency, System Prompt Leakage, and Unauthorized Tool Execution.

OWASP Top Ten Leaders are heavily represented at this conference: Learn More <https://genai.owasp.org/resource/al-security-solutions-landscape-for-llm-and-gen-al-apps-q2-q3-2025/>

The Nine Live Labs format is designed to maximize practical engagement, offering a deep dive into the “Action Layer” of AI through intensive sessions led by our Lab Content Partners. Attendees will participate in three separate lab tracks using live, enterprise-grade software stacks and production-ready environments, gaining hands-on experience with specialized platforms to identify and remediate vulnerabilities in real-time.

Beyond the lab environment, the conference features a Full Gallery of Exhibitors, providing dedicated time for attendees to interact with a diverse range of industry leaders and subject matter experts. This collaborative ecosystem ensures every participant can explore a comprehensive array of security solutions and build a robust defense strategy for the future of autonomous technology.

Continuing Professional Education (CPE) Credits – Full Conference Pass: 8 CPEs; Networking Pass: 5 CPEs; Speakers & Volunteers: Up to 13 CPEs

Pricing and Registration

| Student (Full-time) goes on sale February 14, 2026. | ||

| Member Full Conference Pass | $125.00 | |

| Guest Full Pass (Non-Member) | $175.00 | |

| Networking Pass (1 PM – 5 PM) | $85.00 | |

| Student (Full-time) | $45.00* | *must have proof of student enrollment |

Apply Your Discount at Checkout – ISC2 East Bay Members: Use your registered member email address in the promo code section during checkout to unlock member rates. Ensure your annual chapter dues are current to qualify.

Partner Chapters and Organizations: Enter the unique one-time code provided to you by your organization in the promo code box and hit APPLY to unlock the member rate. Remember to add (+) the tickets so they are visible for checkout. If your partner organization has not communicated your chapter code, please reach out to them directly and copy conferences@isc2-eastbay-chapter.org. You may be asked to prove your membership or student status.

Conference Sessions & Schedule

8:00 AM Breakfast and Registration

8:50 AM Greetings from the ISC2 East Bay Chapter

Welcome, Students, Entrepreneurs, Civic, Business, and Education Leaders, Cyber Professionals, and Job Seekers. We are pleased to share a brief discussion of the ISC2 East Bay Chapter Mission, Rules for our day at Las Positas, expectations for the “In The Bag” activity, and a reminder about your mandatory feedback requirement.

Keynote Speakers

Keynote One – 9:00 AM to 9:45 AM | Malcolm Harkins – Chief Security and Trust Officer, HiddenLayer – Presenting: Economic Impact of Securing AI

Malcolm Harkins is the Chief Security and Trust Officer at HiddenLayer, where he focuses on securing AI models and data. He previously held the role of Chief Security and Trust Officer at Cylance. Prior to that, he was the VP and Chief Security and Privacy Officer (CSPO) at Intel, leading the company’s security, privacy, and trust efforts. Malcolm is a respected voice on risk-based security and governance, promoting a balanced approach to managing risk, cost, and trust. He is an award-winning leader in the field of information security.

Keynote Two – 3:15 PM to 4:00 PM | Neil Daswani, Ph.D. – CISO-In-Residence, Firebolt Ventures; Co-Director, Stanford Advanced Cybersecurity Certification Program – Presenting: Beyond Big Breaches: AI Attacks Are Here

Dr. Neil Daswani is a CISO-in-Residence at Firebolt Ventures and is Co-Academic Director of Stanford’s Advanced Cybersecurity Program. After completing his PhD at Stanford University and leading security initiatives at Google, he co-founded Dasient, a cybersecurity company that Google Ventures invested in and was acquired by Twitter/X. After his time at Twitter, he served as CISO of multiple public companies, including LifeLock, Symantec’s Consumer Business Unit, and QuantumScape. These days, he advises multiple venture capital funds and is focused on both securing artificial intelligence and using AI for cybersecurity applications. He has co-authored two books, “Big Breaches: Cybersecurity Lessons for Everyone” and “Foundations of Security: What Every Programmer Needs to Know.” Dr. Daswani holds over a dozen patents, has published dozens of technical articles, and speaks frequently at top industry events. He earned a PhD and MS in computer science from Stanford, and his career journey started with earning a BS in computer science with honors and distinction from Columbia University.

Stanford Instructor – Meet Neil Daswani

AI Development Life Cycle (AIDLC) Security Framework – Integrated Risk & Lab Alignment (9-Lab Sequence)

The AIDLC framework provides a structured approach to securing AI systems by mapping specific risks to development stages and practical lab exercises. This document tracks the evolution of these mappings to ensure comprehensive coverage across LLM and Agentic AI security.

Core Security Pillars

- Integrity: Ensuring the model and data remain untampered.

- Delivery: Securing the interface between the AI and the user/system.

- Monitoring: Real-time observation of AI behavior and resource usage.

🧪 Combined Cybersecurity Tie-out: LLM & Agentic AI (this is a suggestive model. Labs can cover all OWASP LLM and Agentic Risk; however, we expect these topics at a minimum.)

| AIDLC Stage / Track | OWASP LLM Risk (App Layer) | OWASP Agentic Risk (Action Layer) | Relevant Lab Assignment |

|---|---|---|---|

| Stage 1: Pre-Deployment Security | LLM01: Prompt Injection | ASI-01: Direct/Indirect Manipulation | Lab 1-1: HiddenLayer |

| Track Focus: Model & Data Integrity | LLM04: Data and Model Poisoning | ASI-03: Knowledge Base Poisoning | Lab 2-1: Netskope |

| Focus: Securing the supply chain, model weights, and training data. | LLM05: Improper Output Handling | ASI-08: Excessive Agency | Lab 3-1: Kratikal |

| Stage 2: Deployment & API Security | LLM09: Overreliance | ASI-09: Uncritical Plan Execution | Lab 4-2: Intezer |

| Track Focus: Delivery & Access | LLM02: Sensitive Information Disclosure | ASI-04: PII Leakage in Comms, ASI-02: Agentic Hijacking | Lab 5-2: Veria Labs |

| Focus: Agentic guardrails, MCP Security, AI BOMs and Chatbot red-teaming | Covers all LLM Top Ten | Covers all ASI Top Ten | Lab 6-2: Snyk |

| Stage 3: Runtime & Post-Exploit | LLM10: Unbounded Consumption LLM08: Vector and Embedding Weaknesses | ASI-05: Exfiltration of Memory/Logs ASI-10: Resource Exhaustion | Lab 7-3: Stellar Cyber |

| Track Focus: Monitoring & Response | LLM06: Model Utility & Alignment & LLM09 | ASI-01 (Agentic Prompt Injection) | ASI-02: Agentic Hijacking | Lab 8-3: Mavs AI |

| Focus: Live detection of abuse, exfiltration, and resource exhaustion. | LLM07: System Prompt Leakage | ASI-07: Unauthorized Tool Execution | Lab 9:-3 Corelight |

Track 1: Pre-Deployment Security (Labs 1, 2, 3): Focus on securing the model’s source and code integrity.

Session 1 – Lab one – 10:00 AM – 11:00 AM | HiddenLayer | RAG Rampage: Hands-On Prompt Injection and Defense, Jason Martin

Primary Risks: [LLM01: Prompt Injection] / [ASI-01: Direct/Indirect Manipulation] | LAB TA is Arya Hamid

- 00-10 min: Introduction to the RAG architecture and the specific “Action Layer” being targeted.

- 10-25 min: Exercise 1: Executing a direct prompt injection to bypass system instructions.

- 25-45 min: Exercise 2: Exploiting Indirect Prompt Injection via poisoned external data sources.

- 45-60 min: Hardening phase—implementing HiddenLayer guardrails and verifying the fix.

(Connecting LLM01 to ASI-01): Explain how prompt injection leads to unauthorized action layer manipulation. Prompt injections can occur in multiple input streams in modern agentic applications, any of which can hijack agent behavior and abuse available tools to manipulate actions, such as unauthorized changes to data, data exfiltration, or denial of service of the application.

About Jason Martin

Jason Martin is Director of Adversarial Research at HiddenLayer, where he explores how the latest AI security research intersects with practical application. Jason was amongst the earliest researchers to recognize the need for AI security, founding the Secure Intelligence Team in Intel Labs in 2016 to research AI security and privacy threats and defenses. For 20+ years, Jason has covered such diverse security topics as CPU microcode, authentication and biometrics, trusted execution environments, wearable technology, and network protocols, resulting in over 40 issued patents and several high-profile research papers in adversarial machine learning and federated learning. When he’s not working, Jason is either lost in the Pacific Northwest camping and hiking with his family, or he is lost in a technical project involving 3D printing, microcontrollers, or designing holiday lighting displays synchronized to music.

About HiddenLayer

HiddenLayer: HiddenLayer’s AI Security Platform secures agentic, generative, and predictive AI applications across the entire lifecycle, including AI discovery, AI supply chain security, AI attack simulation, and AI runtime security. Backed by patented technology and industry-leading adversarial AI research, HiddenLayer protects IP, ensures compliance, and enables safe adoption of AI at enterprise scale.

Session 1 – Lab two – 10:00 AM – 11:00 AM | Netskope | Governing the Agentic Surface, Bob Gilbert

The Educational Hook “Visibility is the first line of defense against the ‘Autonomous Ghost.’ If you cannot distinguish between a sanctioned corporate AI instance and a personal one, you cannot enforce an identity boundary. This lab demonstrates how to move from passive observation to active Intermediary Validation—ensuring that when an AI agent ‘plans’ a task, it cannot ‘execute’ that task using sensitive corporate data or unauthorized personal identities.”

Phase 1: Visibility and Risk Assessment

Objective: Discover what GenAI apps are currently in use and identify their risk levels.

- Cloud Discovery: Use SkopeIT to filter traffic by the “Generative AI” category.

- CCI Analysis: Inspect the Cloud Confidence Index (CCI) for apps like ChatGPT or Claude to view their security posture (e.g., “Do they use data for training?”).

- Shadow AI Identification: Identify “Unsanctioned” vs. “Sanctioned” apps and create a Custom Application Tag (e.g.,

GenAI-Sanctioned-List) for easier policy management.

Associated AI Risks:

- ASI04: Agentic Supply Chain Vulnerabilities – Using CCI to vet the reliability of the models and third-party plugins within the AI supply chain.

- ASI06: Memory & Context Poisoning – Identifying if the app allows data to be used for model training, which can lead to persistent context manipulation or data leakage.

- LLM04: Data and Model Poisoning – Pinpointing apps that lack “Opt-out” controls, potentially poisoning future model iterations with proprietary data.

Phase 2: Instance Awareness and Access Control

Objective: Prevent users from logging into personal AI accounts while allowing corporate-managed ones.

- App Instance Profiling: Create a specific App Instance for your corporate ChatGPT or Gemini account.

- Constraint Policy: Configure a Real-time Protection Policy that blocks logins to public instances (e.g.,

gmail.comlogins) while permitting corporate domains. - Dynamic Coaching: Implement a User Alert action. When a user first accesses a GenAI app, trigger a pop-up requiring them to “Acknowledge” the company’s AI usage policy before proceeding.

Associated AI Risks:

- ASI03: Identity & Privilege Abuse – Isolating the Non-Human Identity (NHI) to corporate accounts to prevent credential bleeding into personal AI sessions.

- ASI09: Human-Agent Trust Exploitation – Using the “Acknowledge” coach as a Human-in-the-Loop (HITL) circuit breaker to fight overreliance and anthropomorphic deception.

- LLM06: Excessive Agency – Enforcing instance boundaries to ensure that even if an agent has “Agency,” it is restricted to the corporate data plane.

Phase 3: Real-Time Data Protection (DLP)

Phase 1: Securing Gen AI App Usage

Objective: Stop sensitive data from leaking to personal instances of Gen AI apps

- Deployment: Understand the controls needed and how they are deployed

- Contextual Policy Enforcement: How to implement contextual policies that stop the bad and safely enable the good

- User Experience: See results of policy construction through the lens of an end-user

Associated AI Risks:

- LLM06: Excessive Agency – Enforcing instance boundaries to ensure that even if an agent has “Agency,” it is restricted to the corporate data plane.

Phase 2: Securing Agentic Interactions

Objective: Block threats like prompt injection

- AI Gateway: Deployed between agent and LLMs

- AI Gateway Policy: Configure a Real-time Protection Policy that enforces access controls, DLP, AI Guardrails

- Policy Trigger Results: See how AI Gateway policy impacts agentic transaction

Phase 3: Securing Private LLMs

Objective: Automate adversarial simulated attacks and uncover vulnerabilities

- AI Red Teaming Overview: Analyze overall results

- AI Red Teaming Test Rounds: Analyze finds from simulated attack on Amazon Bedrock

Lab Success Criteria – short quiz on your analysis and assessment of all three phases

Bob Gilbert is the Vice President of Strategy and Chief Evangelist at Netskope, a market-leading cloud security company. Bob is a prolific speaker and product demonstrator, reaching live audiences in more than 45 countries over the past decade. His career spans more than 25 years in Silicon Valley, where he has held leadership roles in product management and marketing at various technology companies. He was formerly the Chief Evangelist at Riverbed, where he was a member of the pioneering product team that launched Riverbed from a small start-up of less than 10 employees to a market leader with more than 3,000 employees and $1B in annual revenue.

Bob was first introduced to the world of cybersecurity as a teenager in the 80s when he hosted a BBS and had to develop his own terminal software to prevent Russian hackers from infiltrating his site, which was being hosted from his parents’ home.

Netskope (netskope.com) is a leader in modern security, networking, and analytics for the cloud and AI era. The unique architecture of its Netskope One platform enables real-time, context-based security for people, devices, and data wherever they go, and optimizes network performance—without trade-offs or sacrifices. Thousands of customers and partners trust the Netskope One platform, its patented Zero Trust Engine, and its powerful NewEdge Network to reduce risk, simplify converged infrastructure, and provide full visibility and control over cloud, AI, SaaS, web, and private application activity.

Session 1 – Lab three – 10:00 AM – 11:00 AM | Kratikal (subsidiary of ThreatCop) | AI and Application Security (SCA/IAST) | Pavan Kushwaha: CEO, Threatcop & Kratikal

Training Objectives: The VIBE Check – Auditing AI-Generated Code for Broken Agentic Agency

- Primary Risks: [LLM05: Improper Output Handling] / [ASI03: Identity & Privilege Abuse] / [ASI05: Unexpected Code Execution]

As enterprises adopt Agentic AI to automate DevOps and internal workflows, a dangerous “Vibe” has emerged: the uncritical acceptance of AI-generated code. This lab uses Kratikal’s simulation-first approach to prove that AI-generated snippets often lack Functional Authorization. Students will learn that when code looks correct but the logic is hallucinated or insecure, the result is Excessive Agency (AG08)—allowing autonomous agents to be hijacked by malicious inputs.

About Pavan Kushwaha: CEO, Threatcop & Kratikal

Pavan Kushwaha is a visionary entrepreneur and cybersecurity expert with over a decade of experience in building world-class security solutions. As the founder and CEO of both Threatcop and Kratikal, Pavan has pioneered people-centric security and advanced simulation technologies. His leadership has driven both organizations to the forefront of the industry, focusing on the intersection of human behavior, automated risk detection, and agentic AI security. He is a frequent speaker at global security summits and is dedicated to fostering the next generation of cybersecurity talent.

About Kratikal (A ThreatCop Subsidiary)

Kratikal Tech Limited is an AI-driven cybersecurity company focused on strengthening organisational resilience across people, processes, and technology. Through its product vertical, Threatcop, Kratikal delivers security awareness training, phishing simulations, and human risk management programs designed to reduce human-driven vulnerabilities. Kratikal is also recognised for deep expertise in Vulnerability Assessment & Penetration Testing (VAPT) across web, mobile, network, and cloud environments, along with industry-grade compliance audits including SOC 2, RBI IS Audit, and ISO 27001. Its AI-powered platform, AutoSecT, further enhances security through automated pentesting and real-time vulnerability monitoring—helping organisations stay protected as threats evolve.

Episode XXvii- Pavan Kushwaha, Founder of Kratikal

About ThreatCop

Threatcop AI Inc. is a People Security Management (PSM) company and a sister concern of Kratikal. Threatcop helps organisations reduce cyber risk by strengthening employee security posture—turning people from the weakest link into the strongest line of defence. With a focus on social engineering and email-led attacks, Threatcop drives measurable improvements in security behaviour and readiness. Serving 250+ large enterprises and 600+ SMEs across 30+ countries, Threatcop supports organisations across E-commerce, Finance, BFSI, Healthcare, Manufacturing, and Telecom. Threatcop follows the A-A-P-E framework (Assess, Aware, Protect, Empower) and delivers products such as TSAT, TLMS, TDMARC, and TPIR to address evolving threats. By reducing human error and improving day-to-day security decisions, Threatcop enables a lasting culture of cybersecurity awareness.

Track 2: Deployment & API Security (Labs 4, 5, 6): Focus on the application and delivery layer.

Session 2 – Lab four – 11:00 AM – 12:00 PM | Intezer | Automated Code Analysis and Incident Triage, A table-top exercise with Mitchem Boles

Genetic Analysis: Rapid Triage Training Objectives:

Current Risk: ASI05: Unexpected Code Execution (RCE), which builds on LLM05:2025 Improper Output Handling.

- 00-15 min: Threat Landscape: How attackers use AI to generate polymorphic malicious plugins.

- 15-45 min: Triage Race: Attendees use Intezer to analyze 5 unknown code samples to identify malicious intent.

- 45-60 min: Automating the response: Setting up “Genetic” signatures to block future variants.

The Educational “Hook”: Connecting LLM07 to AG09: Show how unverified plugin integration allows a malicious “agentic arm” to execute code under the guise of a trusted tool.

About Mitchem Boles:

Mitchem Boles is an executive and strategic Field CISO for Intezer. His experience includes leading Information Security for a large healthcare network, directing Security Operations for a major U.S. electric utility, and roles in security leadership for energy & critical infrastructure, MSSP Services, and consulting. He is a graduate of Texas A&M University; certified CISSP, CCSP, and AWS; and his background of expertise includes: security strategy, SOC deployment, AI strategic implementation, security operations and staff development, tools differentiation, and risk management.

About Intezer:

Intezer (intezer.com) is the leading Autonomous SOC platform designed to emulate the decision-making process of a human security analyst at machine scale. By leveraging proprietary Genetic Malware Analysis, Intezer automatically triages, investigates, and responds to every alert originating from an organization’s existing security stack (EDR, SIEM, and Phishing reports). Unlike traditional automation that relies on rigid playbooks, Intezer’s AI-driven platform identifies the “DNA” of code to distinguish between trusted software, known threats, and sophisticated new mutations. This allows security teams to automate over 90% of their Tier 1 and Tier 2 monitoring tasks, effectively eliminating alert fatigue while ensuring that critical incidents are triaged and remediated in seconds rather than hours.

Session 2 – Lab five – 11:00 AM – 12:00 PM | VeriaLabs | Find Your API Exploits Before They Do, Founders Stephen Xu and Caiden Liao

Training Objectives for Agentic Exploitation: Hijacking AI Tools in Real-Time:

- Primary Risks: AI-native offensive security testing for Sensitive Information Disclosure (LLM02) and PII Leakage (ASI-04)

- 00:00 – 00:10: Demonstrating the Lab setup, showing that what we’re finding so far is “Secure” by modern security tooling standards like SAST.

- 00:10 – 00:20: Platform overview

- 00:20 – 00:40: Exploitation Attempt

- 00:40 – 00:60: Exploitation Overviews and Tool Comparisons.

The Educational “Hook

- (Connecting LLM02 to AG04): Demonstrate how improper handling of sensitive data directly enables unauthorized exfiltration in the agentic stack.

- Your code passes every security scan, but your Agent just leaked the database. In this live-fire lab, we will demonstrate why static analysis fails to catch logic bugs and how Veria is able to catch even the trickiest bugs that expert pentesters miss.

About Stephen Xu

Stephen Xu is a Lead Security Researcher and Offensive AI expert. As Co-Founder of Veria Labs, he builds autonomous red-teaming agents that continuously test software for critical vulnerabilities. A competitive hacker by background, Stephen specializes in finding the logic flaws that static analysis tools miss—recently uncovering zero-day RCE exploits in major AI platforms.

About Cayden

Cayden Laio is a co-founder of Veria Labs, building AI hacking agents that automatically find and fix security vulnerabilities. He has competed extensively in Capture the Flag (CTF) competitions and is the current co-captain of the #1-ranked CTF team in the US for three consecutive years, with a world 3rd place in 2025. Cayden regularly competes in many of the world’s largest CTFs and is a two-time DEFCON CTF finals qualifier. When he was under 18 and ineligible to apply for the official U.S. Cyber Team, he instead joined as a coach and led the team to a 2nd place finish at the European Cybersecurity Championships.

About Veria Labs

Veria Labs is an AI-native offensive security platform focused on autonomous vulnerability discovery and exploitation. Founded by members of the #1 U.S. competitive hacking team, Veria Labs builds specialized AI agents that integrate directly into Git repositories and CI/CD pipelines to continuously analyze codebases for deep, complex vulnerabilities that traditional tools often miss. These agents operate at machine speed to identify exploitable flaws, generate real-world proof-of-concept exploits to validate risk, and reduce false positives. By combining autonomous discovery with context-aware remediation guidance, Veria Labs helps organizations shift offensive security left—enabling high-confidence validation of critical risks before they reach production.

Session 2 – Lab six – 11:00 AM – 12:00 PM | snyk | Keeping Your Agents on a Leash: Agentic guardrails, MCP Security, AI BOMs and Chatbot red-teaming, Developer Security, SCA, and Supply Chain Risk, Lab Leader Javier Garza

AI workshop: Keeping Your Agents on a Leash: Agentic guardrails, MCP Security, AI BOMs and Chatbot red-teaming, Developer Security, SCA, and Supply Chain Risk

Abstract: In this hands-on AI focused workshop you will learn how to: a) securely vibe coding using AI agentic coding tools like Cursor, Claude, Copilot, etc; b) how to detect Tool poisoning, Prompt injection risks, Toxic flow vulnerabilities in MCP servers using CLI tools; and c) how to do AI-focused red teaming against AI systems, LLM endpoints, and AI-powered APIs to uncover risks like jailbreaks, prompt injections, data leakage, and unsafe behaviors

Training Objective for Developer Security & Supply Chain – Securing the Agentic Supply Chain: From Code to Tooling:

- Primary Risks: [LLM01-03]: Prompt Injection, Sensitive Information Disclosure, Supply Chain / [ASI-01-05]: Agent Goal Hijack, Tool Misuse and Exploitation, Identity and Privilege Abuse, Agentic Supply Chain, Unexpected Code Execution

- Student Achievement: Participants will master the ability to install an MCP server, and generate MCP rules to put guardrails on AI-generated code; generate a Software Bill of Materials (SBOM) for agentic development; scan MCP servers for toxic flows and other vulnerabilities; and red-team Agents at runtime for prompt injections and other AI-related vulnerabilities.

- 00-15 min: Intros and Lab setup

- 15-30 min: Establishing security guardrails on agentic coding

- 30-40 min: Generating an AI Bill of Materials in JSON and generating a component Org chart

- 40-50 min: Scanning MCP Servers

- 50-60 min: Red-teaming a chatbot

The Educational “Hook”

- Hands-on workshop with free tools you can use to secure agentic code development. We will be using Cursor as the agentic code tool but you can apply the same principles to any other tools like Cline, Copilot, Gemini, etc.

Meet Your Lab Leaders:

About Javier Garza

Solutions Engineer at snyk, Javier Garza is a Technology evangelist who has written many articles on HTTP/2, security, and web performance, and is the co-author of the O’Reilly Book “Learning HTTP/2” (https://amzn.to/2TJbpUU). Javier has spoken at more than 30 events around the world, including well-known conferences like Velocity, AWS Re: Invent, and PerfMatters, and is the co-host of the San Francisco Bay Area DevSecOps Meetup group. His life’s motto is: share what you learn, and learn what you don’t. In his free time, he enjoys challenging workouts and volunteering with different non-profits.

About snyk

snyk (snyk.io): snyk is the leader in developer security, providing an enterprise-grade, multi-layered platform powered by the DeepCode AI orchestration engine to secure every component of the modern software supply chain. By combining symbolic AI with machine learning, Snyk delivers real-time vulnerability scanning and automated fix suggestions across source code (SAST), open-source dependencies (SCA), container images, and infrastructure as code (IaC). snyk’s technical edge lies in its curated vulnerability database and its ability to integrate directly into the developer workflow.

12:00 PM – 12:55 PM Lunch – Featuring Ike’s Love & Sandwiches

Exhibitors are asked to put in their orders to Hospitality early on Friday. Your student genius will pick up your food at 11:45 and bring it to your station. Here are the lunch menu options:

MEAT MADNESS:

- Matt Cain – Turkey, Roast Beef, Salami, Godfather Sauce, Provolone

- Barry B. – Turkey, Bacon, Swiss

- Ike’s Reuben – Pastrami, Purple Slaw, Mack Sauce, Gouda

- Menage a Trois – Chicken (Halal), Honey Mustard, BBQ Sauce, Real Honey, Pepper Jack, Cheddar, Swiss

- Da Vinci – Turkey, Ham, Salami, Italian Dressing, Provolone

- Jim Rome – Turkey, Avocado, Red Pesto, Cheddar

VEGGIE LOVERS:

- Sometimes I’m a Vegetarian – Mushrooms, Artichoke Hearts, Pesto, Provolone

- Persephone – Vegan Steak. Mushrooms, Yellow BBQ Sauce, Gouda

- Meatless Mike – Vegan Meatballs, Marinara, Pepper Jack

- Fall’ing for Ike’s – Vegan Turkey, Cranberry, Sriracha, Cheddar

- Handsome Owl – Vegan Chicken, Wasabi Mayo, Teriyaki, Swiss

- Pee Wee – Vegan Turkey, Purple Slaw, French Dressing, Swiss

VIVA LA VEGAN: All ingredients are vegan, including the mayo and cheese

- Vegan Sometimes I’m a Vegetarian – Mushrooms, Artichoke Hearts, Vegan Cheese

- Vegan Handsome Owl – Vegan Chicken, Teriyaki, Wasabi Mayo, Vegan Cheese

- Vegan Meatless Mike – Vegan Meatballs, Marinara, Vegan Cheese

- Vegan Fall’ing for Ike’s – Vegan Turkey, Cranberry, Sriracha, Vegan Cheese

- Vegan Pee Wee – Vegan Turkey, Purple Slaw, French Dressing, Vegan Cheese

SALAD: The Hanging Garden – Mushrooms, Marinated Artichoke Hearts,

Red Onions, Tomatoes, Dirty Croutons, with Ranch Dressing on the side.

Track 3: Runtime & Post-Exploitation Forensics (Labs 7, 8, 9): Focus on monitoring, response, and incident handling.

Session 3 – Lab seven – 1:00 PM – 2:00 PM | Stellar Cyber | Open XDR for AI Applications: Detection and Response | Daniel Cheng

Technical Objectives: Exploit to EDR: Open XDR Correlation

- Primary Risks: [LLM10: Unbounded Consumption] / [AG10: Resource Exhaustion]

- 00-15 min: A walk-through on the Stellar Cyber SecOps platform and seeing alerts being triggered in real time during an attack, observing the Correlation Analysis, and how to leverage the platform for quick investigation and response.

- 15-60 min: The 45 minutes will be hands-on and very interactive. Attendees will go through a Capture the Flag type session, identifying the initial attack, tracing through a series of attacks, see how the Correlation Engine automatically groups related alerts together, and how to leverage the platform for response action. Alerts triggered are sourced from multiple data sources such as EDR agent, Firewall, Windows events, etc…

The Educational “Hook”

- (Connecting LLM10 to AG10): Demonstrate how a logic loop in an agentic workflow creates a self-inflicted DoS through infinite resource recursion.

About Daniel Cheng

Daniel Cheng is the Head of North America Channel at Stellar Cyber, where he leads the strategy and execution of the company’s reseller ecosystem. With a strong focus on enablement, trust, and execution, Daniel works closely with partners to help them successfully adopt and scale Stellar Cyber’s AI-driven SecOps platform. Since taking on this role in early 2025, Daniel has played a key role in expanding channel awareness, developing a growing community of technical champions, and strengthening partner capabilities through certification and hands-on training. His work bridges business strategy and technical execution, ensuring partners are equipped to deliver real value to their customers. At the ISC2 East Bay Conference, Daniel will serve as Stellar Cyber Lab Leader, guiding participants through an interactive, hands-on session that demonstrates how a unified, open, and AI-powered SecOps platform can simplify threat detection, investigation, and response while improving operational efficiency and security outcomes.

About Stellar Cyber

Stellar Cyber (stellarcyber.ai) is the innovator of Open XDR, the only intelligent, next-generation security operations platform that provides high-fidelity detection and response across the entire attack surface. By unifying EDR, SIEM, and NDR into a single, AI-driven interface, Stellar Cyber eliminates the data silos that traditional security tools create, providing a comprehensive, correlated view of the entire threat landscape. The platform uses advanced machine learning and behavioral analytics to automatically detect sophisticated attacks, reduce alert fatigue for security analysts, and significantly accelerate incident response workflows through a single, integrated “pane of glass.”

Session 3 – Lab eight – 1:00 PM – 2:00 PM | Mavs AI | – GenAI Runtime Security, Amit Kharat, Manjul Kubde, Abhijit Tannu

Primary Risks: LLM 01: Prompt Injection, LLM 02: Sensitive Information Disclosure, LLM 07: System Prompt Leakage, and ASI 01: Agent Goal Hijack.

Training Objectives:

- Student Achievement: Participants will master the implementation of runtime guardrails to detect and block malicious injections and sensitive information disclosure in a live environment.

- 00-15 min: Presenting the Solution: Amit Kharat presents the runtime risk landscape and how the Mavs AI solution covers the primary LLM and ASI threats.

- 15-30 min: The Guided Walkthrough: Arya leads participants through the Mavs AI software, including the admin screens, using pre-configured security scenarios.

- 30-50 min: Stress-Testing the Guardrails: Participants use their own examples or provided industry-specific samples to attempt to bypass the Mavs AI security layer.

- 50-60 min: Feedback & Governance: Final wrap-up and collection of participant feedback via an online survey form.

Meet Your Lab Leaders:

About our Lab Leader, Amit Kharat: Amit is a cybersecurity entrepreneur and co-founder of Mavs AI, building next-generation runtime security for Generative AI use. With over a decade of experience in data-centric and enterprise security, Amit has played a foundational role in scaling global security platforms across BFSI, manufacturing, pharma, and government sectors. His work spans product strategy, go-to-market leadership, and deep customer engagement across North America, Europe, the Middle East, and India. At Mavs AI, Amit is focused on solving emerging GenAI risks, including sensitive data exposure, prompt-level attacks, and lack of runtime visibility, helping enterprises adopt AI with confidence and control.

About Manjul Kubde: Manjul Kubde is a builder at heart and a co-founder of Mavs AI, where she’s focused on one simple idea: enterprises should be able to use GenAI boldly, without losing sleep over security. At Mavs AI, she’s working hands-on to solve real problems like sensitive data leakage and prompt-level attacks, grounded in how systems actually break in the wild. Before this chapter, Manjul spent over 16 years at Seclore as co-founder and VP of Engineering, and earlier led engineering at Herald Logic. She’s known for calm decision-making, strong technical instincts, and a deep respect for craft, teams, and doing things the right way.

About Abhijit Tannu: Abhijit Tannu is the co-founder of Mavs AI, a platform helping enterprises adopt GenAI securely by preventing sensitive data leakage, prompt injection, and misuse, without slowing teams down. He brings over two decades of experience building enterprise cybersecurity products that win on trust and scale globally. An IIT Kharagpur alumnus, Abhijit co-founded Seclore and Herald Logic, where he helped grow businesses from the first customer to tens of millions of dollars in ARR, competing successfully with the largest global players. Deeply customer-focused, he has built product and Customer Success teams from scratch and worked hands-on with Fortune 500 customers across the US, Europe, the Middle East, and India.

About Mavs AI

Mavs AI (mavsai.com) is on a mission to help enterprises embrace Generative AI confidently by addressing the unique risks of a LLM / model-driven world. As organizations embed Large Language Models (LLMs) into day-to-day workflows, Mavs AI delivers real-time, in-the-loop security by monitoring every interaction to detect and prevent the leakage of sensitive data, such as PII and IP. Our LLM-agnostic engine acts as a specialized security layer that intercepts and analyzes prompts and responses, identifying risks like prompt injection and data exposure before damage is done. By enforcing policies across users, agents, and applications, Mavs AI enables organizations to maintain strict governance over their AI ecosystems without compromising performance. In an era where GenAI is transforming global productivity, Mavs AI ensures that this transformation happens securely, responsibly, and at scale.

Session 3 – Lab nine – 1:00 PM – 2:00 PM | Corelight | Network Detection and Response (NDR). Model Theft Defense: Providing Network Forensics for detecting unauthorized bulk data transfer/exfiltration, Mike Henkelman

Training Objectives:

- Primary Risks: [LLM07: System Prompt Leakage] / [ASI-07: Unauthorized Tool Execution]

- 00-15 min: The Network Signature of Model Theft: Seeing what the application logs miss.

- 15-45 min: Forensic Deep Dive: Analyzing network traffic to catch a “Model Weights” exfiltration attempt.

- 45-60 min: Setting up Corelight sensors to alert on unauthorized tool/data exfiltration in real-time.

The Educational “Hook” – (Connecting LLM07 to ASI-07): Demonstrate how network-level visibility catches system prompt leakage and exfiltration that application-layer logs are blind to.

Mike Henkelman is a Sr Solutions Engineer for Corelight with thirty years of technical experience that includes building enterprise data centers and NOCs for ISPs, doing edge router and switch development for Cisco, and running the Vuln Management and FedRAMP IL5 programs for VMWare. He frequently speaks at conferences such as DefCon and B-Sides.

About Corelight

Corelight (corelight.com) is the pioneer and fastest-growing provider of Open Network Detection and Response (NDR), delivering a unique approach to cybersecurity risk centered on comprehensive network evidence. As the only solution powered by the dual open-source foundations of Zeek® and Suricata—now enhanced by GenAI—Corelight provides deep visibility into network traffic by transforming raw data into high-fidelity logs, metadata, and actionable insights. By equipping elite defenders at the world’s most mission-critical enterprises and government agencies with this rich evidence, the platform enables rapid threat hunting, forensic analysis, and complete situational awareness across complex, distributed environments. Ultimately, Corelight helps security teams level up their defenses, accelerating investigations and dramatically reducing the time required to detect and neutralize sophisticated attacks and model theft.

CAKE BREAK & Networking – 2:00 – 3:00 PM Vendor Exhibits and Lab follow-up

Remember to return to the main presenter hall for the 3 PM Keynote

Following Neil Daswani’s Keynote, he will moderate a Panel Discussion – The Secure AI Revolution – Moving from Theory to Blueprint.

We have intentionally assembled a panel with unprecedented intellectual density. This cohort represents the full lifecycle of the industry—from the architect of the first software firewall to the engineers currently re-architecting identity for the cloud-native era. Their participation provides the “Engineering Reality” required to move our community from theoretical AI policy to a functional, high-scale blueprint.

Moderator: Dr. Neil Daswani (Keynote & Panelist) | Co-Academic Director, Stanford University / CISO-in-Residence, Firebolt Ventures | The Architect’s Journey: From Stanford PhD to CISO to Educator

Bio: Neil Daswani is the CISO-In-Residence at Firebolt Ventures and a Co-Director of the Stanford Advanced Cybersecurity Certification Program. He has served in a variety of research, development, teaching, and executive roles at QuantumScape, Symantec, LifeLock, Twitter, and Google. Neil has been both a security entrepreneur, having co-founded Dasient, which was acquired by Twitter, and has also served as a Chief Information Security Officer (CISO) at LifeLock, Symantec’s Consumer Business Unit, and QuantumScape. Neil holds a dozen U.S. patents and has published dozens of technical articles in top industry and academic conferences. He is also co-author of two books on cybersecurity: “Big Breaches: Cybersecurity Lessons for Everyone” and “Foundations of Security: What Every Programmer Needs to Know.” He earned Ph.D. and M.S. degrees in Computer Science at Stanford University, and he holds a B.S. in Computer Science with honors with distinction from Columbia University.

“Because Dr. Daswani has sat on every side of the table—as a Stanford researcher, a Silicon Valley founder, and the CISO of massive public enterprises—we’ve asked him to share a story about the moment he realized that academic ‘best practices’ had to evolve to survive the speed of the Secure AI Revolution.

We are also very privileged to have Neil leading and moderating this panel as the capstone of this absolutely brilliant day.

George D. DeCesare, Board of Directors, HITRUST | The Trifecta: Law Enforcement, Legal Authority, and Board-Level Governance

Bio: George D. DeCesare is a prominent leader in the cybersecurity and risk management landscape, currently serving on the Board of Directors for HITRUST. We have had the honor of George as the Keynote for our Cybersecurity in HITECH Conference at Zeiss, when he served as Senior Vice President and Chief Technology Risk Officer at Kaiser Permanente, where he was accountable for technology risk management for 12 million members and a massive enterprise workforce. George’s career spans over 20 years in healthcare, including roles as CISO and in-house counsel specializing in healthcare regulatory compliance, privacy, and cybersecurity law. Beyond his corporate executive tenure, George served as a Captain for the Los Angeles County Sheriff’s Department for 17 years. During his law enforcement career, he was a specialist investigator in identity theft and served on the Advisory Council on Computer Forensics. He is a member of the Healthcare Industry Cybersecurity Task Force formed by the U.S. Department of Health and Human Services and holds a Juris Doctorate from Northwestern California University School of Law and an MBA from Columbia Pacific University.

“Because George has looked at security through the eyes of a Law Enforcement Captain, a Regulatory Attorney, and now a HITRUST Board Director, we’ve asked him to share a story about the ‘Chain of Custody’ for truth in AI—specifically, how we ensure the integrity of our most sensitive data when the traditional rules of evidence and privacy are being rewritten at the Board level.”

Varun Prasad – Managing Director, BDO | The Auditor’s Lens: Establishing Verifiable Trust in Emerging AI

Bio: Varun Prasad is a managing director at BDO’s third-party attestation practice. In his current role, he works with tech companies to evaluate their cybersecurity posture and assess compliance with SOC 2 and various ISO standards to help them meet customer requirements and build trust with stakeholders. He focuses on complex and emerging security, privacy, cloud, and AI assurance requirements. Varun is an IT audit and risk management professional with 15+ years of progressive experience gained through various roles for Big4 firms and world-leading corporations across multiple geographies. He has managed and executed a variety of IT audit-based projects from end to end. He has provided various IT audit and assurance services, such as SOC 1/SOC 2 examinations, ISO 27001/42001/22301 audits, cybersecurity assessments, SOX testing, and privacy reviews. Varun is the VP of the ISACA SF chapter and part of ISACA’s IT Audit and Assurance Task Force.

“Because Varun sits at the intersection of Big4 rigor and the emerging world of AI assurance standards like ISO 42001, we’ve asked him to share a story about the ‘Trust Deficit’—specifically, how we move beyond marketing claims to create a verifiable, auditable blueprint for AI that actually stands up to a third-party assessment.”

Jules Okafor – CEO and Founder, Revolution Cyber | Strategic Human-Centered Security & Digital Trust

Bio: Jules Okafor, JD, is an attorney, best-selling author, and “Cyber Cultural Architect” and the Founder & CEO of RevolutionCyber and Obsidian Sanctum. At RevolutionCyber, she deploys Security Pods™ — fractional, AI-augmented teams that help high-growth companies operationalize security, AI governance, and trust without adding permanent headcount. Her work focuses on integrating and driving the adoption of SaaS security platforms, so they deliver real outcomes, not shelfware. Through Obsidian Sanctum, Jules advises executives and high-net-worth families on private digital protection and identity risk in an AI-accelerated world.

She believes the future of cybersecurity is quintessentially human — and that security must operate and drive revenue, not just audit activities.

“Because Juliet has built her career on the belief that the future of security is human and has advised global CXOs on operational excellence, we’ve asked her to share a story about ‘Strategic Enablement’—specifically, how we move a company culture from fear-based security to a model where AI is the foundation of digital trust.”

Bernard Brantley – CISO, Corelight | The Hunter’s Defense: High-Value Asset Protection & Threat Intelligence

Bio: Bernard Brantley is the CISO at Corelight. He joined Corelight from Amazon, where he led threat hunting and intelligence for Amazon Consumer Payments. Previously, he was the architect behind network security monitoring for Microsoft’s High Value Asset (HVA) environments, including XboxLIVE. He serves as an advisor to several tech companies and is a participant in federal workshops on AI strategy. He attended the U.S. Military Academy at West Point.

“Because Bernard has been the architect behind the defense of ‘High Value Assets’ at Microsoft and Amazon and now leads as CISO for Corelight, we’ve asked him to share a story about ‘The Hunter’s Instinct’—specifically, how we maintain the offensive advantage in threat intelligence when our adversaries are beginning to weaponize the same AI models we use for our defense.”

Rohit Valia – CEO and Founder, Tumeryk.com | The Infrastructure Pioneer: From the First Firewall to Enterprise AI

Bio: Rohit Valia is the CEO and Founder of Tumeryk.com, an enterprise AI security pioneer. His vast domain expertise spans fintech, cloud computing, AI/ML, and analytics. He has held leadership positions at FICO, IBM, Oracle, and Sun Microsystems. He led the development of the first software firewall (SunScreen Firewall), launched the world’s first pay-per-use infrastructure-as-a-service solution (Sun Grid), and led Product Management for the first Java 2EE certified application servers. He drove the successful transformation of the leading financial services AI/ML platform at FICO by crafting strategies to offer real-time SLAs for billions of financial transactions in the cloud.

“Because Rohit led the development of the world’s first software firewall and pioneered the first pay-per-use cloud infrastructure, we’ve asked him to share a story about ‘Generational Shifts’—specifically, how the lessons he learned securing the birth of the internet are the only things that will save us in the era of high-frequency, AI-driven financial transactions.”

Shashwat Sehgal – Founder and CEO, P0 Security | Identity Explosion & Engineering Reality: Securing Machines at Scale

Bio: Shashwat Sehgal is the Founder and CEO of P0 Security, re-architecting Privileged Access Management (PAM). He specializes in automating access for the “identity explosion” within production stacks. Previously, at Splunk, he led OpenTelemetry initiatives for microservices, and at Cisco Meraki, he used ML for automated network troubleshooting. He is an IIT-Delhi and Columbia Business School graduate.

“Because Shashwat has lived the ‘Engineering Reality’ of scaling deep-tech at Splunk and Meraki, we’ve asked him to share a story about the ‘Identity Explosion’—specifically, why our current models of privileged access are failing when faced with the sheer scale of machine-to-machine agents that AI is now introducing to the production stack.”

Let’s Grow – 4:45 – 5:00 PM – Conference Feedback Forms, and planting your seedlings in porcelain and earth. Happy Earth Day, from all of us at ISC2 East Bay Chapter.

To receive all 8 CPEs, attendees must complete their Conference Feedback Form. Volunteers and Presenters can claim additional CPE for their preparation and planning participation. https://forms.gle/kFQwuTdg9PQJ2rBt7

Meet our Distinguished Guests and enjoy pictures from our blooming March Events

Meet our Sponsors

Platinum Sponsors

- Abnormal AI (abnormal.ai) is the leading AI-native human behavior security platform, leveraging machine learning to stop sophisticated inbound attacks and detect compromised accounts across email and connected applications. The anomaly detection engine leverages identity and context to understand human behavior and analyze the risk of every cloud email event—detecting and stopping sophisticated, socially-engineered attacks that target the human vulnerability.

- Astrix Security (astrix.security) is the industry’s first non-human identity (NHI) security platform, purpose-built to help enterprises secure the vast and often invisible web of service accounts, API keys, and OAuth tokens that power the modern automated enterprise. As organizations shift toward agentic AI and autonomous workflows, Astrix provides the only holistic solution for the entire NHI lifecycle—from automated discovery and risk assessment to real-time remediation. By securing SaaS-to-SaaS connectivity and managing the “Action Layer” where AI agents interact with corporate data, Astrix prevents unauthorized access and ensures operational continuity in an increasingly connected world.

- HiddenLayer (hiddenlayer.com) HiddenLayer’s AI Security Platform secures agentic, generative, and predictive AI applications across the entire lifecycle, including AI discovery, AI supply chain security, AI attack simulation, and AI runtime security. Backed by patented technology and industry-leading adversarial AI research, HiddenLayer protects IP, ensures compliance, and enables safe adoption of AI at enterprise scale.

- Intezer (intezer.com) is the leading Autonomous SOC platform designed to emulate the decision-making process of a human security analyst at machine scale. By leveraging proprietary Genetic Malware Analysis, Intezer automatically triages, investigates, and responds to every alert originating from an organization’s existing security stack (EDR, SIEM, and Phishing reports). Unlike traditional automation that relies on rigid playbooks, Intezer’s AI-driven platform identifies the “DNA” of code to distinguish between trusted software, known threats, and sophisticated new mutations. This allows security teams to automate over 90% of their Tier 1 and Tier 2 monitoring tasks, effectively eliminating alert fatigue while ensuring that critical incidents are triaged and remediated in seconds rather than hours.

- Netskope (netskope.com) is a leader in modern security, networking, and analytics for the cloud and AI era. The unique architecture of its Netskope One platform enables real-time, context-based security for people, devices, and data wherever they go, and optimizes network performance—without trade-offs or sacrifices. Thousands of customers and partners trust the Netskope One platform, its patented Zero Trust Engine, and its powerful NewEdge Network to reduce risk, simplify converged infrastructure, and provide full visibility and control over cloud, AI, SaaS, web, and private application activity.

- Stellar Cyber (stellarcyber.ai) is the innovator of Open XDR, the only intelligent, next-generation security operations platform that provides high-fidelity detection and response across the entire attack surface. By unifying EDR, SIEM, and NDR into a single, AI-driven interface, Stellar Cyber eliminates the data silos that traditional security tools create, providing a comprehensive, correlated view of the entire threat landscape. The platform uses advanced machine learning and behavioral analytics to automatically detect sophisticated attacks, reduce alert fatigue for security analysts, and significantly accelerate incident response workflows through a single, integrated “pane of glass.”

Gold Sponsors

- Corelight (corelight.com) is the pioneer and fastest-growing provider of Open Network Detection and Response (NDR), delivering a unique approach to cybersecurity risk centered on comprehensive network evidence. As the only solution powered by the dual open-source foundations of Zeek® and Suricata—now enhanced by GenAI—Corelight provides deep visibility into network traffic by transforming raw data into high-fidelity logs, metadata, and actionable insights. By equipping elite defenders at the world’s most mission-critical enterprises and government agencies with this rich evidence, the platform enables rapid threat hunting, forensic analysis, and complete situational awareness across complex, distributed environments. Ultimately, Corelight helps security teams level up their defenses, accelerating investigations and dramatically reducing the time required to detect and neutralize sophisticated attacks and model theft.

- Exiger (exiger.com) is a global leader in AI-powered supply chain risk management, helping organizations illuminate and protect their extended enterprise and third-party ecosystems. Through its proprietary 1Exiger platform, the company provides real insights into financial health, geopolitical exposure, and ESG risks, enabling proactive management of the complex dependencies that define modern global commerce.

- RevolutionCyber (revolutioncyber.com) is a boutique cybersecurity and resilience consulting firm that blends strategic advisory, cultural transformation, and technology enablement to redefine how organizations approach security. They focus on aligning security with core business outcomes, such as resilience, trust, and revenue generation, rather than treating it as a standalone technical function, offering services that enhance security culture and prepare for rapid incident response.

- Sepio (sepiocyber.com) provides a Hardware Access Control (HAC) platform that offers visibility and control over all hardware assets utilizing physical layer fingerprinting. By using machine learning to analyze device behavior at the physical layer, Sepio identifies rogue devices and malicious hardware implants that bypass traditional security controls, ensuring the integrity of IT, OT, and IoT environments.

- Snyk (snyk.io) is the leader in developer security, providing an enterprise-grade, multi-layered platform powered by the DeepCode AI orchestration engine to secure every component of the modern software supply chain. By combining symbolic AI with machine learning, Snyk delivers real-time vulnerability scanning and automated fix suggestions across source code (SAST), open-source dependencies (SCA), container images, and infrastructure as code (IaC). Snyk’s technical edge lies in its curated vulnerability database and its ability to integrate directly into the developer workflow, enabling security leaders to implement global risk policies while empowering engineering teams to remediate security debt without sacrificing deployment velocity. By bridging the gap between security and development, Snyk provides the scalability, visibility, and auditability required for large-scale digital transformations and secure AI adoption.

Silver Sponsors

- BalkanID (balkanid.com) provides modular, AI-assisted identity security and access governance (IGA) solutions designed to work with both connected and disconnected applications. Its platform streamlines critical tasks such as user access reviews, lifecycle automation with purpose-based just-in-time access, and identity security posture management (ISPM)—including IAM risk and RBAC analysis and an AI Copilot feature. By empowering organizations to enforce least privilege principles, BalkanID enables enterprises to efficiently manage complex identity risks at scale.

- Happiest Minds Technologies (happiestminds.com) is an AI-led, digital engineering and “Mindful IT” company that delivers secure, scalable solutions spanning from chip to cloud. By integrating deep expertise in Gen AI with core capabilities in product engineering, cybersecurity, and automation, Happiest Minds helps enterprises across BFSI, Healthcare, and Hi-Tech fast-track their digital evolution. Their innovation-led strategy is supported by strategic partnerships with AWS and Microsoft, as well as a growing portfolio of proprietary platforms like Arttha and FuzionX.

- Horizon3.ai (horizon3.ai) provides NodeZero, an autonomous penetration testing platform. It continuously assesses an organization’s internal and external attack surface, automatically discovers exploitable weaknesses, and verifies vulnerabilities without human intervention. By rigorously emulating real-world attacker behaviors and techniques, NodeZero identifies critical attack pathways and provides clear, actionable remediation steps to proactively strengthen security posture and continuously validate an organization’s defenses against evolving cyber threats, supporting a continuous security validation program.

- Illumio [illumio.com]: Provides Zero Trust Segmentation to prevent the lateral movement of breaches across complex hybrid environments, including data centers, multi-cloud infrastructures, and endpoints. It meticulously visualizes application dependencies and communication flows, micro-segments networks down to individual workloads, and enforces granular, adaptive policies to contain attacks. This approach dramatically minimizes breach impact by reducing the attack surface and significantly enhancing an organization’s overall cyber resilience and security posture.

- Mavs AI (mavsai.com) delivers smart guardrails, intelligent policy control, and real-time visibility for every GenAI interaction. They address specific risks like PII and sensitive data shared in prompts, prompt injections compromising systems, and misuse by business users or rogue application users. Mavs AI closes the gap where innovation outpaces safety guardrails, enabling enterprises to innovate fearlessly and scale AI responsibly.

- NetAlly (netally.com) offers portable network testing and analysis solutions essential for IT and cybersecurity professionals managing complex infrastructures. Its suite of tools provides deep visibility into both wired and wireless networks, enabling efficient troubleshooting of connectivity issues, precise validation of network performance, and verification of security configurations. This comprehensive approach helps ensure reliable network infrastructure, reduces downtime, and facilitates rapid issue resolution for enhanced operational stability and secure network operations.

- One Identity (oneidentity.com) delivers a unified identity security platform that provides comprehensive identity and access management (IAM) solutions across an organization’s entire digital landscape. By bridging the gap between Identity Governance and Administration (IGA) for user lifecycle management, Privileged Access Management (PAM) for securing elevated accounts, and Access Management for secure authentication, One Identity provides a holistic, identity-centric approach to security. This unified platform helps organizations manage identities, govern access, and secure privileged accounts while streamlining identity lifecycles and enforcing least privilege principles. Ultimately, One Identity enables enterprises to improve their compliance posture and strengthen overall security across complex, hybrid IT environments.

- P0 Security (p0.dev) is helping companies modernize PAM for multi-cloud and hybrid environments with the most agile way to ensure least-privileged, short-lived, and auditable production access for users, NHIs, and agents. Centralized governance, just-enough-privilege, and just-in-time controls deliver secure access to production, as simply and scalably as possible. P0’s Access Graph and Identity DNA data layer make up the foundational architecture that powers privilege insights and access control across all identities and production resources, including the new class of AI-driven agentic workloads emerging in modern environments.

- Redblock’s Agentic AI (redblock.ai) automates identity and security workflows across disconnected apps — extending SailPoint and other identity systems for full coverage. It connects what Identity systems can’t, eliminates CSVs and IT tickets, and automates actions safely with policy guardrails. The result: a smaller identity attack surface in days, not months. Manual workflows become autonomous, auditable actions.

- StrongDM (strongdm.com), founded in 2015, offers a unified Zero Trust Access platform for managing and auditing access to all critical infrastructure, including databases, servers, Kubernetes clusters, and web applications. By connecting users securely without the need for traditional VPNs, StrongDM centralizes control over technical access and meticulously logs every session with precision for comprehensive auditing and compliance. The platform enforces granular, least-privilege access policies in real-time, significantly enhancing security posture while streamlining compliance workflows. Secure-by-design and boasting a 98% customer retention rate year-over-year, StrongDM is focused on making life easier and more operationally effective for technical experts by improving efficiency across complex, distributed environments.

- ThreatCop (threatcop.com) Threatcop AI Inc. is a People Security Management (PSM) company and a sister concern of Kratikal. Threatcop helps organisations reduce cyber risk by strengthening employee security posture—turning people from the weakest link into the strongest line of defence. With a focus on social engineering and email-led attacks, Threatcop drives measurable improvements in security behaviour and readiness. Serving 250+ large enterprises and 600+ SMEs across 30+ countries, Threatcop supports organisations across E-commerce, Finance, BFSI, Healthcare, Manufacturing, and Telecom. Threatcop follows the A-A-P-E framework (Assess, Aware, Protect, Empower) and delivers products such as TSAT, TLMS, TDMARC, and TPIR to address evolving threats. By reducing human error and improving day-to-day security decisions, Threatcop enables a lasting culture of cybersecurity awareness.

- Veria Labs (verialabs.com) provides an AI-native offensive security platform designed for autonomous vulnerability discovery and exploitation. Founded by members of the #1 US competitive hacking team, Veria Labs builds specialized AI agents that integrate directly into Git repositories and CI/CD pipelines to analyze codebases continuously. These agents operate faster than human researchers, finding deep, complex vulnerabilities that traditional tools miss and generating real-world exploit PoCs to verify risk and eliminate false positives. By adapting to business logic and providing automated remediation, Veria Labs shifts offensive security left, enabling organizations to validate their security posture and secure critical vulnerabilities with high confidence and at machine speed.

Note that sponsors Astrix, Balkan, StrongDM, and One Identity are scheduled to be at the November Conference on Identity and will not or may not be among the March Exhibitors. Some sponsors, Exiger, Sepio, The Good Data Factory, and Summit7, have been able to coordinate an exhibit; they are family to the Chapter and welcome at anytime. We will accommodate all sponsors who commit by Feb 28th with a 6-foot table, space for one pop-up, a student staff for the day, a dinner ticket, and two conference tickets for attendance and coverage at their exhibitor table. Gold and Platinum sponsors have priority placement and are encouraged to place an additional pop-up in the Presentation Hall. Space for the March event was finalized in January of 2026. November 2026 is nearly sold out.

Please become an ISC2 East Bay Sponsor by donating today.

About ISC2

ISC2 is the world’s leading member organization for cybersecurity professionals, driven by our vision of a safe and secure cyber world. Our nearly 675,000 members, candidates, and associates around the globe are a force for good, safeguarding the way we live. Our award-winning certifications – including cybersecurity’s premier certification, the CISSP® – enable professionals to demonstrate their knowledge, skills, and abilities at every stage of their careers. ISC2 strengthens the cybersecurity profession’s influence, diversity, and vitality through advocacy, expertise, and workforce empowerment, accelerating cyber safety and security in an interconnected world. Our charitable foundation, The Center for Cyber Safety and Education, helps create more access to cyber careers and educates those most vulnerable. Learn more and get involved at ISC2.org. Connect with us on X, Facebook, and LinkedIn.